How We Got Here

Shae O said: “I don’t think we’ve fully understood what happens to a society that collectively can no longer believe what they’re seeing. Skepticism becomes the default. Deception becomes ordinary. We’re tending towards distorted hyper-personalized realities.”

I was working a thread on Bluesky yesterday about psychological manipulation and algorithmic contamination when I saw this statement by Shae O, a PhD student whose essays on artificial intelligence provide fertile ground for this kind of study. I realized it dovetailed with that very same argument from a different perspective.

I realized what she was saying was true, but I also realized that the manipulation of human consciousness didn’t start with photography or the printing press. What those technologies offered was the capacity to manipulate someone beyond the range of the human voice. Print and photography expanded the range, power, and permanence of rhetoric, for good or ill, extending the power to manipulate across distance and, depending on the medium, across time itself.

When photography emerged in the 19th century, it was treated as mechanical truth. The camera did not interpret — it captured. Except it didn’t. Daguerreotypes could be staged. Negatives retouched. People believed photos because they didn’t know the range of what was possible with them.

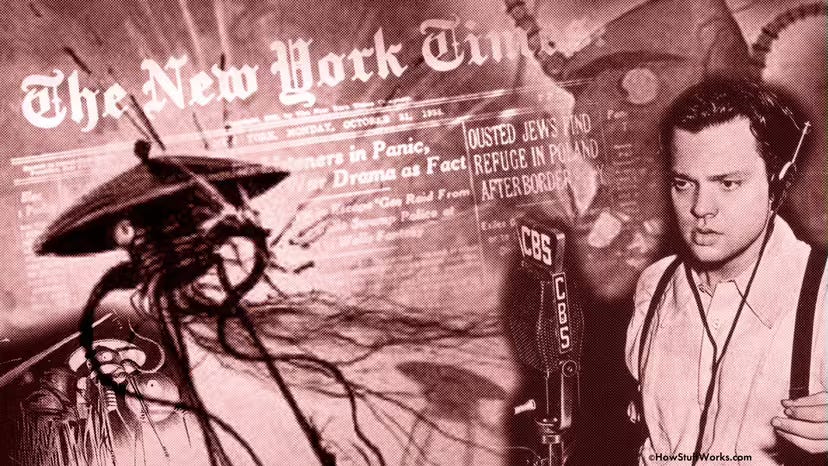

Even then: “seeing” required literacy. Each new media format added to the challenge. Films demonstrated amazing trick photography, merging reality and illusion with matte paintings. Radio demonstrated its own power through Orson Welles’ legendary broadcast of War of the Worlds.

It became a hard lesson for the public that spectacle could simulate reality, and that narrative framing could override sensory doubt. As the range of media expanded, keeping the truth in the viewfinder became ever more difficult. By the mid-20th century, propaganda films proved something darker: moving images could engineer belief at scale.

Radio broadcaster Orson Welles adapted H.G. Wells’ novel ‘War of the Worlds’ from Victorian England to present-day New Jersey, gaining new listeners for his broadcast. Public Domain/©HowStuffWorks

Today we battle a host of media transformations, from deepfakes and Sora’s generative video to AI voice clones and private render farms recreating reality wholesale.

The real challenge is not simply that we can no longer trust what we see. It is that we are reshaping reality at a scale and speed previously unimaginable.

We can fabricate images, video, audio, and narrative in our living rooms. We can deploy bots and increasingly agentic AI to amplify those fabrications instantly. Billions may see them before any correction can be issued. And even when corrections are released, they rarely displace the falsehood. Bias sustains it. Identity protects it. Politics weaponizes it.

Once a lie hits the internet, it becomes sediment. It does not disappear. It layers. Add algorithmic manipulation and contamination, and we move from doubting what we see to losing any shared baseline epistemology.

Epistemology asks:

- What do you know?

- How do you know it?

- When did you know it?

- What is your source?

The main conceit of the famed X-Files series: The truth is out there. Somewhere…

Truth, Where Art Thou?

Digital environments are eroding this shared agreement on how truth is determined. Entire communities now operate within fragmented narrative ecosystems, cut off from accurate information. The Narrative War does not stay contained. It spills into adjacent domains — political reasoning, public health decisions, educational frameworks — cascading into the fundamental algorithmic thinking of individuals.

This is not abstract. When epistemology fractures, awareness fractures. Skepticism becomes ambient. Inescapable. Fatiguing.

When everything must be doubted:

- Truth competes with fabrication.

- Evidence competes with vibes.

- Institutions lose authority.

- Cynicism masquerades as intelligence.

A culture that defaults to disbelief does not become enlightened. It becomes exhausted. And exhaustion is politically useful.

As political strategist Steve Bannon stated in a 2018 Frontline interview:

“The opposition party is the media… and the media can only focus on one thing at a time. All we have to do is flood the zone.”

Flood the zone. Overwhelm. Saturate the information environment. When attention is fragmented and cognitive bandwidth is exhausted, deliberation collapses. The public reacts or withdraws. An exhausted society does not meaningfully govern itself. Media literacy therefore ceases to be optional enrichment. It becomes civic infrastructure. But even media literacy is insufficient.

We need critical thinking reinforced at scale. We need epistemic resilience. We need a practice of epistemic hygiene.

Epistemic hygiene is the disciplined, ongoing maintenance of one’s belief-forming processes in an information environment engineered for speed, manipulation, and emotional activation. It is the cognitive equivalent of sanitation: a recognition that exposure to distortion is constant, that contamination is probable, and that preservation of clarity requires habit rather than outrage.

In a digital ecosystem where images can be fabricated, narratives algorithmically amplified, and falsehood layered into permanent sediment before correction arrives, epistemic hygiene demands that we interrogate not only what we are being told, but how it reached us, who benefits from our belief, what incentives shaped its distribution, and what emotional levers are being pulled in its presentation.

Epistemic hygiene replaces reflexive trust and ambient cynicism alike with structured scrutiny, cross-verification, latency tolerance, and epistemic humility. Without it, skepticism curdles into exhaustion and shared reality fractures; with it, individuals retain the capacity to deliberate, disagree, and discover truth within systems designed to overwhelm them.

- People must be able to verify before reacting.

- To cross-check before sharing.

- To sit with ambiguity rather than collapsing into immediate certainty.

- To understand that digital environments are not neutral.

We must come to grips with this fundamental truth:

Our world of instant media does not exist to protect our cognition. It increasingly exists to exploit vulnerabilities in our thinking, manipulating dopamine, farming outrage and harvesting our user information while exploiting our doubts and fears.

Epistemic resilience is not an innate capacity. It is a learned discipline. Most people don’t know they need it. They are confident they can “tell what’s true.”

They do not understand how deeply their perception has been curated through algorithms, repetition, aesthetic design, color theory, emotional priming, and engineered reinforcement loops. These techniques have been created, expanded, and refined over generations, from religious preaching to confidence men, to Joseph Goebbels, to Madison Avenue, to social media algorithms.

The problem isn’t that seeing isn’t believing. The problem is that believing now requires the skill to discern what is true and the epistemic rigor to discover where truth lies.

The Dancing House: Prague’s Polarizing “Drunk” Building — a real structure often mistaken for computer graphic art.

This essay explores an escalating epistemic crisis shaped by digital infrastructure and distributed fabrication. The following concepts anchor the argument:

1. Reality Fabrication at Scale – The democratization of image, audio, and video manipulation through accessible digital tools, bots, and agentic AI systems.

2. Speed and Amplification – Falsehoods can be created and distributed globally before correction is possible, creating informational sediment that cannot be fully removed.

3. Algorithmic Contamination – Platform systems curate perception through reinforcement loops that privilege engagement over accuracy.

4. Epistemological Fracture – The erosion of shared methods for determining truth: What do you know? How do you know it? What is your source?

5. Fragmented Narrative Ecosystems – Communities increasingly operate within self-reinforcing informational silos, weakening shared civic reasoning.

6. Ambient Skepticism – Doubt becomes constant and exhausting rather than strategic and clarifying.

7. Exhaustion as Political Utility – Information flooding, as openly described by political strategists, overwhelms public cognition and reduces deliberative capacity.

8. Epistemic Resilience – The learned discipline of verification, cross-checking, ambiguity tolerance, and critical thinking required for modern civic survival.

Epistemic Hygiene, Strange Attractor © Thaddeus Howze, 2026

Strange Attractor

In an age where images can be fabricated in basements and amplified by machines, truth no longer needs to collapse under censorship. It dissolves under abundance.

Strange Attractor examines the hidden architecture of narrative power, algorithmic influence, and epistemic erosion in the digital age. This is not a war over facts. It is a war over how facts are determined.

The battlefield is cognition. The prize is attention. Welcome to the Narrative War.

We chose to publish this essay because we have noted a disturbing trend among popular news sites: the tendency to serve the algorithms instead of the needs of the readers themselves. SCIFI.radio prides itself on its journalistic integrity. We hope more sites will take note and choose to stand closer to the light of the truth than to whatever Google thinks will get the most clicks. – ed.


Thaddeus Howze is an award-winning essayist, editor, and futurist exploring the crossroads of activism, sustainability, and human resilience. He's a columnist and assistant editor for SCIFI.radio, and as the Answer-Man he keeps his eye on the future of speculative fiction, pop culture, and modern technology. Thaddeus Howze is the author of two speculative works: ‘Hayward's Reach’ and ‘Broken Glass.’
